What Is the History of Contact Tracing?

Contact tracing, which involves identifying and tracking people who may have come into contact with an infected person, has been seen as one way out of the COVID-19 pandemic. While technology, particularly mobile phones, is seen as key to making modern contact tracing work, other forms of contact tracing were employed in the past. In fact, much of the current approach to contact tracing builds on the historical development of this idea.

Early History

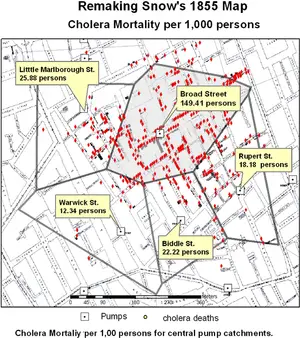

Contact tracing in the full sense, identifying who has an infection and then determining who may have come into contact with that person, is likely a practice developed over the last two hundred years, but there is historical evidence of at least partial attempts in the more distant past. Some of the earliest recorded evidence comes from the medieval period, during the Black Death, when people known to have the plague were quarantined and their homes were marked, often with a cross or other sign, so that everyone would know to avoid the house. This was an early attempt at public health: by letting those living near an infected person know they should avoid contact, communities tried to contain local outbreaks. The practice often sealed the fate of the infected individual, but it may have limited the spread of the plague to some extent. There is no evidence, however, that infected individuals were systematically mapped.

One of the earliest efforts to map an outbreak of infection comes from John Snow, who tracked and mapped the source of a cholera outbreak in London in 1854 (Figure 1). Simply by talking to people, finding out when and where cases occurred, and then mapping that data, he produced one of the first maps of a disease outbreak. The map showed that cases clustered in a district of Soho, London, around a particular water pump on Broad Street, which he identified as the source of the outbreak. His work challenged the prevailing idea that 'foul air' spread cholera and other infectious diseases, and it made him one of the founders of epidemiology and public health. The handle of the Broad Street pump was removed, London's sanitation improved in the years that followed, and Snow's work formed the basis of public health strategies that helped reduce later outbreaks.[1]

Cholera was not the only disease shown to have an identifiable source and pattern of spread. As the late 19th century progressed, contact tracing, in which infected individuals were mapped so that the source of infection could be identified and isolated, was applied to other infectious diseases. Tuberculosis (TB) was one of the biggest concerns during the rapid urbanization of the period: crowded living conditions and transmission through infected droplets allowed the disease to spread quickly in the growing cities of Europe and North America. By the 1890s, some authorities in the United States and Europe had begun requiring that TB cases be reported, so that public health officials could map and trace the locations of outbreaks. Reporting also gave officials an alert system for warning people in affected areas that an outbreak was occurring. Response times were still relatively slow, so outbreaks were often not effectively contained, but routine mapping gave some areas time to prepare and allowed infected areas to be isolated. By the close of the 19th century, contact tracing had become established as a primary public health strategy.[2]

Recent Developments

Contact tracing in the late 19th century was mostly applied to large-scale public health crises such as cholera and tuberculosis outbreaks. In the early 20th century, however, medical science increasingly recognized that contact tracing could help control other infections as well. Sexually transmitted diseases, which had been little studied before the early 20th century, were increasingly treated like other infectious diseases. In Scotland and elsewhere in the UK, venereal disease became a public health concern during and after World War I. Contact tracing created many problems, both for those seen as passing on infections (mainly women) and for the authorities. In the 1920s and 1930s, contact tracing for venereal disease was initially seen as a way for the authorities to track criminal activity and to socially ostracise individuals. In the 1930s and 1940s, however, public health officials changed course, conducting detailed interviews with infected individuals and reconstructing their sexual histories so that a clearer picture of transmission could be developed. This was considered vital because the presence of large numbers of soldiers in the UK between 1940 and 1944 could have accelerated the spread of disease. Nevertheless, contact tracing for these diseases often concentrated on restricting the movements of women who were infected or thought likely to be infected, which was seen as the best way to prevent the spread of infection.

In the United States in the late 1930s, syphilis was seen as a serious public health threat, and Surgeon General Thomas Parran promoted contact tracing as a way to prevent its spread. As in the UK, the program was initially difficult to accept socially and was also seen as a way for the authorities to control specific individuals. By the late 1940s, however, more controlled interviews and the use of penicillin, distributed both to people who had syphilis and to those likely to contract it, proved more successful. Rather than isolating or publicly shaming individuals, the program simply distributed penicillin to people in an area who were likely to spread the infection. This helped to sharply reduce syphilis cases across wide areas of the United States by the 1950s.[3]

Perhaps the most successful example of using contact tracing to reduce the effects of a disease is smallpox, which was declared eradicated in 1980 (Figure 2). This success was largely due to contact tracing: infected individuals were isolated and treated, and immunization efforts were then focused on the people around them, directing available resources to those who most urgently needed vaccination. The World Health Organization (WHO) established what was perhaps one of the largest contact tracing programs ever undertaken, with a global scope. From the 1950s to the 1970s, contact tracing was used in developed and developing countries alike, with teams of volunteers, doctors, and other workers interviewing people to determine where outbreaks were likely occurring. This made it possible to track all major areas of smallpox transmission and eventually led to the disease's eradication. The combination of a vaccine and contact tracing made this one of the most successful large-scale WHO efforts.[4]

Impact of Contact Tracing

Contact tracing has transformed public health and epidemiology since its inception, and its most recent advances involve the use of mobile phone data. Outbreaks such as the 2014 Ebola epidemic and, above all, the 2015 MERS outbreak shaped how South Korea and some other Asian countries approached major viral outbreaks. In South Korea, mobile phone data were used to trace people's whereabouts under legal powers granted to the government. This experience helped South Korea and other East Asian countries pioneer the use of such data to track how individuals' movements may affect the transmission of an infectious disease. Building on that earlier use, mobile data proved essential during the 2020 COVID-19 outbreak in helping South Korea, China, and other East Asian countries limit the overall impact of the disease. Western states are only now beginning to apply mobile data in this way, and the practice remains controversial because of concerns over location data and the sharing of personal data with government authorities. Nevertheless, the history of contact tracing makes clear that tracking someone's whereabouts, and the community to which they could spread infection, is critical to reducing infection rates. The examples of cholera, tuberculosis, syphilis, and smallpox all demonstrate this, even without the use of mobile phones.[5]

Summary

Contact tracing has been seen as an essential public health strategy since the mid-19th century, when John Snow showed that mapping infections could help contain an outbreak or reduce its overall size. Public health officials soon realized this was true not only for cholera: contact tracing has proven useful in limiting the impact of many other infectious diseases. New technologies could allow faster responses and tracing, helping to isolate local outbreaks before infected individuals pass the disease on to large numbers of people.

References

1. For more on John Snow's work, see: Hempel, Sandra. The Medical Detective: John Snow, Cholera and the Mystery of the Broad Street Pump. London: Granta Books, 2007.

2. For more on how contact tracing was used in the 19th century and TB, see: National Collaborating Centre for Chronic Conditions (Great Britain), and Royal College of Physicians of London. Tuberculosis: Clinical Diagnosis and Management of Tuberculosis, and Measures for Its Prevention and Control. 2006. http://www.ncbi.nlm.nih.gov/books/NBK45802/.

3. For more on how venereal disease began to use contact tracing and controversies around this, see: Aral, Sevgi O., ed. Behavioral Interventions for Prevention and Control of Sexually Transmitted Diseases. New York, NY: Springer, 2008.

4. For more on the success of smallpox contact tracing, see: McGuire-Wolfe, Christine. Foundations of Infection Control and Prevention. Burlington, MA: Jones & Bartlett Learning, 2018, p. 11.

5. For more on recent contact tracing technology and application, see: Mittelstadt, Brent Daniel. The Ethics of Biomedical Big Data. New York, NY: Springer Berlin Heidelberg, 2016, p. 26.